When we talk about the huge part artificial intelligence will play in our future, there's always that wag who warns us about the dangers of AI becoming sentient: Skynet, terminators, judgement day and all that other fun doomsday stuff.

Personally, I think that the possibilities presented by artificial intelligence are immense and exciting. The inexorable march of technology and innovation has historically been viewed with a mixture of awe and suspicion, and for good reason; but businesses that embrace AI will undoubtedly emerge the winners in the coming years.

Within my own business (marketing, technology and communications), AI is the future. From self-driving cars to Google's search algorithm to Siri, artificial intelligence is here to stay. A large part of the appeal is that software can make decisions without the need for human input.

But as AI becomes omnipresent, we need to constantly ask: Are there certain decisions that only humans should make?

Rather than be worried about machines becoming sentient and enslaving us, perhaps we should be concerned about being over-eager to surrender the one thing a machine, by definition, can never have: our humanity.

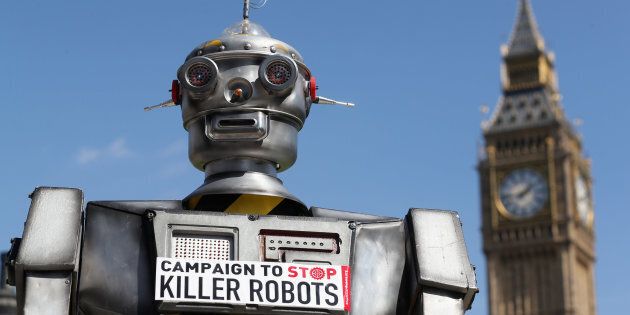

While this may be considered the realm of the tin-foil hat brigade, some of the world's brightest minds are already tackling this subject, having formed the Campaign to Stop Killer Robots.

Yes, that's seriously what they're calling themselves, and no, they're not a bunch of crazy people or John and Sarah Connor cosplayers.

Headed up by Mary Wareham of Human Rights Watch, the campaign isn't worried about the potential for humanity to be crushed by droid overlords some time in the future -- their concern is robots shedding blood in the here and now.

The campaign primarily takes issue with "weapons that operate on their own without meaningful human control."

Basically, they are concerned about a future in which wars are fought by autonomous weapons. Think of the drones that are already dropping bombs in the world's various theatres of war, except instead of a 'pilot' controlling the drone and making decisions from afar about what to strike and when, the machine will simply fly on its own and fire missiles based on its own real-time calculations.

And the 'future' the campaign foresees isn't next decade, next year, or even tomorrow. As CBS' 60 Minutes put it in January of this year, "it's already a military reality" on which the Pentagon are spending $3 billion annually.

"Some autonomous machines are run by artificial intelligence which allows them to learn, getting better each time", 60 Minutes reported.

"The potential exists for all missions considered too dangerous or complex for humans to be turned over to autonomous machines that can make decisions faster and go in harm's way without any fear."

What's worth adding is that anything that lacks fear also tends to lack empathy and compassion. Thus, the Campaign to Stop Killer Robots is calling "to ban weapons systems that, once activated, would select and attack targets without meaningful human control."

The basis for the campaign's concern is that since machines lack human judgment and don't understand context, they cannot make "complex ethical choices on a dynamic battlefield, to distinguish adequately between soldiers and civilians, and to evaluate the proportionality of an attack".

They're compelling arguments, but there's a sense that the proverbial horse has bolted. With the United States, China, Israel, South Korea, Russia, and the United Kingdom all having invested heavily in AI-powered weapons, it's doubtful an international consensus will be reached to abandon what must be hundreds of billions of dollars' worth of research and development on a project that may be ethically questionable but appears militarily sound.

Even if all the interested parties sat down to discuss the issue, all it would take is for one nation to hold out for the entire consensus to fall down. To paraphrase Aussie comedian Jim Jefferies, no country wants to bring a gun to a drone fight.

Perhaps more pressing is the AI that is already being relied upon to make very human decisions.

Several states in the USA are using AI in the criminal justice system, with information provided by machines being used to inform sentencing decisions. This is being criticised on two fronts.

First, there are concerns regarding the transparency of the justice system when algorithms are being used. It's currently a hot topic in the state of Wisconsin, after Eric Loomis appealed his six-year sentence on the grounds he was denied the right to due process, as his sentence was informed by a software product called Compas.

Loomis' 2013 guilty verdict saw the judge tell him, "you're identified, through the Compas assessment, as an individual who is a high risk to the community".

Because Compas is proprietary software, its algorithms are largely kept secret from the public. And while the judge maintained Loomis would have received the same sentence with or without Compas' input, how can Loomis be sure of that if he isn't permitted to see the report that determined the length of his stay behind bars?

Secondly, there is a strong argument to be made that some software being used is kind of racist. This is far more troubling.

ProPublica published a feature on the issue, which started by comparing the seemingly unrelated cases of 18-year-old Brisha Borden and 43-year-old Vernon Prater.

In 2014, Borden and a friend took a six-year-old's bike and scooter, collectively valued at $80, for a bit of a joyride in broad daylight and were charged with burglary and petty theft. A year earlier, Prater was busted for shoplifting tools valued at $86.35 from a hardware store.

As for their criminal records, Borden had committed misdemeanours as a juvenile, while Prater had done five years in prison for armed robbery.

So what happened when they were taken to jail to be booked?

"A computer program spat out a score predicting the likelihood of each committing a future crime", ProPublica reported. "Borden -- who is black -- was rated a high risk. Prater -- who is white -- was rated a low risk."

As the story goes on to point out, two years later the system had been proven wrong, and pretty emphatically: "Borden has not been charged with any new crimes. Prater is serving an eight-year prison term for subsequently breaking into a warehouse and stealing thousands of dollars' worth of electronics."

A deeper analysis of the numbers available to ProPublica showed a troubling trend, with black defendants almost twice as likely as white defendants to be wrongly pegged as future re-offenders.

It's worth noting that Northpointe, the company that created the software in question, disputed the findings. It's also worth noting that Northpointe are the brains behind Compas.

Ultimately, we're in the very early days of AI, and no one is claiming that it's perfect. The opportunities in numerous fields from communications to health to robotics are very exciting, but when smart people like Elon Musk, Bill Gates and Stephen Hawking publicly express concerns, the world should take note.
